“Philosophically, intellectually—in every way—human society is unprepared for the rise of artificial intelligence,” states Henry Kissinger in this interesting article. Inspired by the announcement of a computer program that would soon challenge international champions at the game of Go, the former US secretary of state reflects on the challenges humanity faces amid the rapid development of artificial intelligence.

It is true that in memorization and computation, AI is likely to win any game played against humans, and I agree with the vital distinction Kissinger makes that for our purposes as humans, the games are not only about winning; they are about thinking.

That is exactly why I have not yet started freaking out about robots taking over. And I will not, at least not before I run into hundreds of them wandering idly around town, gossiping in the cafés, booing the bad jokes made by some nihilist robot stand-up comedian, or romantically gazing at the sunset. Until then, they might be better at winning the game, but without understanding why they are doing it, the game is still ours.

Yes, games are about thinking. As such, they are an essential part of the learning process, mainly because they help us develop one of the most crucial elements of learning: chance. Chance is a necessary aspect of second-order learning, where new information comes to light through exploration. Without chance, we would be stuck in the exploitation loop, with limited options to move forward and survive as a species.

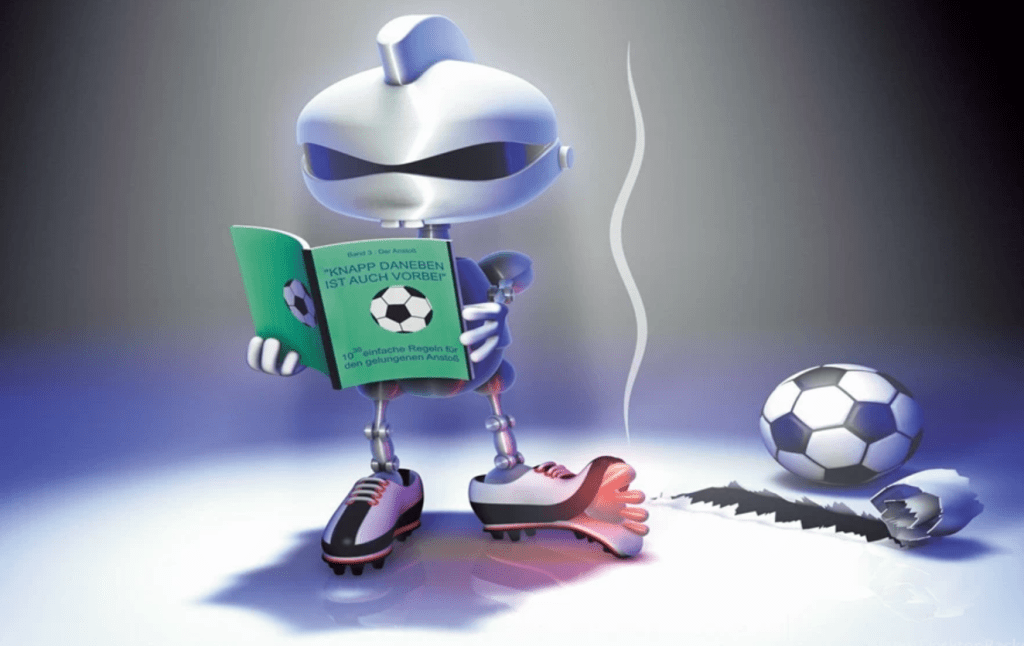

Yes, AI can beat us at Go, chess, and probably, in the near future, football. But having AI robots play against each other would be an endless penalty shootout. They don’t get the meaning of saving or scoring the penalty. And without thinking, there is no winning (or losing).

Picture this: the game goes into a penalty shootout. Both goalkeepers and all of the strikers are the latest model of an AI-driven robot designed by the same tech company. They are assembled from the same hardware and run on the same computing power and software. There is no room for any random behavior that might lead to chance, or to any outcome outside the predetermined script. So what happens? Who wins?

That situation may not be so hypothetical in the near future, given the AI industry’s rapid advance. But what happens to the program script if both goalkeepers are programmed to save and the strikers are programmed to score? Whose script will prevail? Having the goalkeepers save and the strikers score each penalty kick is not a viable option. At least not within the same game (and not within the limited number of physical dimensions we are aware of).

Illustration: desktopbackground.org

Therefore, unless there is a deliberate software malfunction in one or more players, the game simply can’t be played. Unless, of course, the winning side has been picked in advance in the programming. But that is more of an ethical than a technical question.

The other way of breaking the predefined pattern is by introducing chance. But how do you program chance? And if it’s programmed, can it still be considered chance?
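To make the question concrete, here is a minimal Python sketch of the shootout. Everything in it is an assumption made for illustration: the three-corner goal, the sudden-death rule, and the names `take_kick` and `shootout` are hypothetical, not a model of any real robot. Without a random source, the identical robots follow the same script and the shootout never resolves. With a seeded pseudo-random generator, a winner can emerge, yet the same seed always replays the same result, which is one way of seeing why programmed chance is a slippery idea.

```python
import random

# Hypothetical simplification: the goal has three target zones.
CORNERS = ("left", "center", "right")


def take_kick(rng=None):
    """Return True if the striker scores.

    With no random source, striker and goalkeeper run the same fixed
    script (both pick the first corner), so every kick is saved.
    """
    if rng is None:
        striker_aim = keeper_dive = CORNERS[0]
    else:
        striker_aim = rng.choice(CORNERS)
        keeper_dive = rng.choice(CORNERS)
    return striker_aim != keeper_dive


def shootout(seed=None, max_rounds=50):
    """Sudden-death shootout between teams A and B.

    Returns (winner, rounds_played); winner is None if nobody is ahead
    after max_rounds. The cap exists only so the scripted case terminates.
    """
    rng = random.Random(seed) if seed is not None else None
    for round_no in range(1, max_rounds + 1):
        a_scores = take_kick(rng)
        b_scores = take_kick(rng)
        if a_scores != b_scores:
            return ("A" if a_scores else "B", round_no)
    return (None, max_rounds)


if __name__ == "__main__":
    print("scripted:", shootout())          # identical scripts, no winner
    print("seeded:  ", shootout(seed=7))    # pseudo-random "chance"
    print("replayed:", shootout(seed=7))    # same seed, same result
```

The seeded run looks like chance from the stands, but anyone who knows the seed knows the result before the first kick is taken.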

While I am very much looking forward to driverless car services and the other conveniences of AI that will lead to a more sustainable future, I remain skeptical of the speed at which the digital world is becoming a substitute for some intrinsic human capabilities and experiences. I feel deeply concerned about its effect on the human ability to learn.

In our everyday lives, we have become increasingly used to entrusting our decisions to technology. We use apps to help us with tasks ranging from the most basic arithmetic to choosing life partners. At the same time, our organizations base some of their most critical decisions on AI. From investment portfolios to talent search, digital applications are taking over the decision-making processes that run operations. The growing importance of these digital tools is increasingly pushing human relations, experience, and interaction into the back seat.

And there is another side to it. Decision-making is triggered by events and their speed. The immediate answer has become imperative in almost everything we do. And ‘everything’ is becoming more and more dependent on the internet. But the internet, while it has undoubtedly brought us incredible social progress, inhibits one of our intrinsic capabilities: reflection. That cognitive ability alone differentiates us from both the rest of the living world and the AI world. The ability to reflect systemically on events, the underlying circumstances that led to them, and the knowledge created in the process is one of the pillars of the Human Condition.

Reflection is an essential part of the reason why there is a vast difference between knowledge and knowing. Here is what Henry Kissinger has to say on the issue: “The digital world’s emphasis on speed inhibits reflection; its incentive empowers the radical over the thoughtful; its values are shaped by subgroup consensus, not by introspection. For all its achievements, it runs the risk of turning on itself as its impositions overwhelm its conveniences.”

One of the main impositions is that we entrust ever-greater parts of our memory, and of our cognitive ability to process knowledge, to technology. In doing so, we focus more on events than on the underlying circumstances, and this, as Peter Senge would put it, distracts us from seeing the longer-term patterns of change. One of these patterns is the slow but accelerating process of climate change. Focusing solely on events keeps us in a hectic reactive mode without ever understanding what is driving the events in the first place. The only way to get ahead of events is to follow Senge’s advice and begin learning to see slow, gradual processes, which requires slowing down our frenetic pace and paying attention to the subtle as well as the dramatic.

Otherwise, we’ll keep on (hysterically) spending more on the effects than on the causes. This makes our attitude more and more reactive. It favors a victim mentality while inhibiting individual responsibility. The more algorithms stand between us, the more control we transfer from ourselves to the powers that control the algorithms. The fewer interactions we have among ourselves, the less power we hold individually.

The complexity of our world is constantly and rapidly increasing. Entrusting our future solely to artificially designed predictability as a remedy for natural randomness may accelerate our responses. But without an understanding of the underlying structures that sustain our world, these responses will not turn into solutions.

Chance is a fundamental part of these structures. It is the ignition of change. Without it, no new knowledge gets created. Without chance, we get access to knowledge but lose the chance of knowing. Without chance, we would know everything, but that would be all. We would never get to know what we don’t know today. We would never get to know who won the penalty shootout.